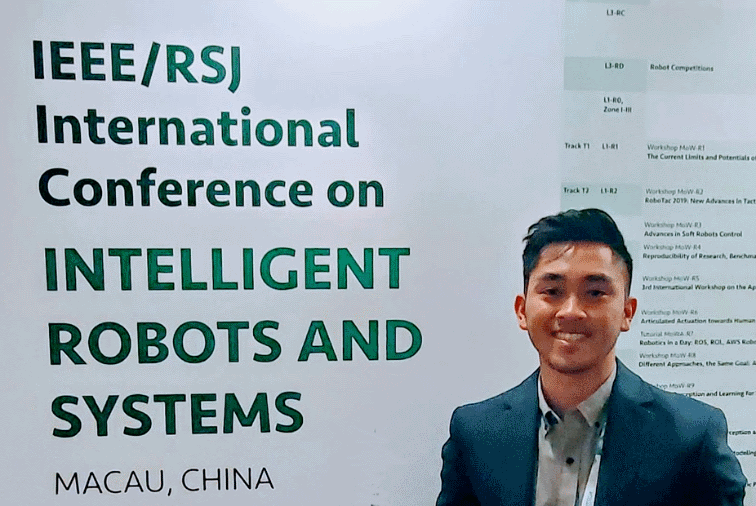

Squishy Robotics’ Director of Controls Engineering Brian Cera delivered a paper at the IEEE/RSJ International Conference on Intelligent Robots and Systems (IROS). The multi-day conference attracted many of the world’s leading robotics experts and educators; for the first time, the annual IROS conference was held in Macau, China.

Cera’s paper, which was co-authored by Squishy Robotics’ CEO Dr. Alice Agogino and former Squishy Robotics’ Engineer Anthony Thompson, was titled: “Energy-Efficient Locomotion Strategies and Performance Benchmarks using Point Mass Tensegrity Dynamics.” The paper details a new approach for developing and controlling tensegrity structures. It describes how Squishy Robotics’ novel motor and paired cable actuation scheme achieve rolling locomotion with spherical tensegrities. This patent-pending work improves the speed, energy efficiency, and directional trajectory-tracking accuracy of our Mobile Robot.

Approximately 3,500 people attended this year’s annual IROS conference, which is now in its third decade. “It was a great opportunity to be able to present some of our latest work,” Cera said. “I learned so much, too. International experts in robots and intelligent systems were at the conference. Getting to listen to inspiring keynotes and seeing several familiar faces in the research community was energizing.”

“The interesting work in robust controls and motion planning that I saw at IROS will undoubtedly inform and influence new advances at Squishy Robotics,” Cera stated.

Abstract:

This work presents a model-based approach for creating robust control policies for rolling locomotion with a spherical tensegrity topology. Utilizing the structured dynamics of Class-1 tensegrity systems, we turn to model predictive control (MPC) to generate optimal multi-cable actuation trajectories for dynamic rolling. Although the resulting multi-cable state-action trajectories successfully outperform the benchmark single-cable policy performance in speed, computational constraints prevent MPC from being applied in real-time. To address this, we demonstrate that a contextual policy trained using supervised deep learning on the generated optimal MPC trajectories can be used as an end-to-end feedback policy for real-time directed rolling locomotion.